The pitch for autonomous Oracle support sounds compelling: AI diagnoses incidents, retrieves the right Oracle Support notes, formulates the fix, and executes it — no human involvement required. Incidents resolved in minutes, not hours. The on-call DBA sleeping through the night. The AP team's period close running smoothly without a single support call.

The pitch is correct about the benefits and wrong about the architecture required to get them. Full autonomy — AI proposing and executing fixes without any human approval gate — is not the right design for Oracle ERP production environments. The risk profile is wrong, the compliance requirements are unsatisfied, and the failure modes are unacceptable. The Oracle AI platform is built around a different principle: autonomy everywhere it is safe, human oversight exactly where it is required, and a governance layer that enforces the boundary architecturally rather than by policy.

The Problem With Full Autonomy

Consider what "full autonomy" means in an Oracle EBS Accounts Payable environment. The AI platform diagnoses an ORA-01652 error (unable to extend temp segment) and determines that extending the TEMP tablespace will resolve it. Full autonomy means the platform executes the ALTER TABLESPACE statement that adds or resizes a tempfile without asking anyone. If the AI is right, the problem is solved. But what if the AI is wrong, or right about the root cause but wrong about the safe fix path?

An ALTER SYSTEM command issued in the wrong context can destabilize a running database. A data update issued against the wrong table can corrupt financial records. An automated AP hold release applied to an invoice that was legitimately on hold for a fraud review removes a control that exists for a reason. These are not theoretical failure modes. They are the categories of mistakes that manual DBA processes are designed to prevent — and that an autonomous AI system, operating without oversight, can reproduce at machine speed.

There is also the compliance dimension. SOX Section 404 and ITGC Change Management controls require documented evidence that system changes were reviewed and approved by an authorized person before execution. A system that executes changes autonomously — even if correct — cannot produce this evidence. Full autonomy and SOX compliance are structurally incompatible.

The Traffic Light Framework

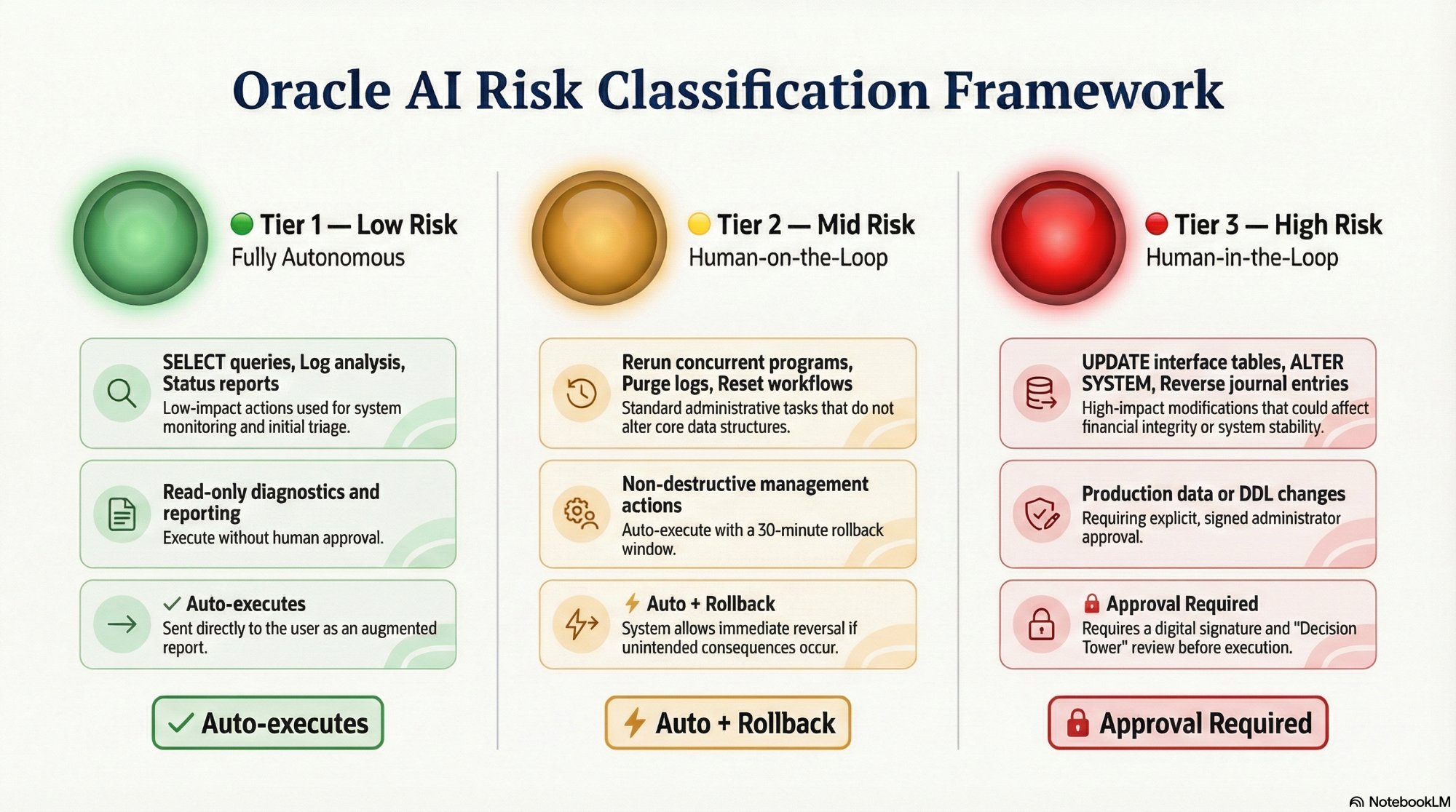

The Oracle AI platform resolves this by classifying every action into one of three risk tiers before it executes anything. The classification happens at triage — when the LLM Router first processes the incident — and determines the entire approval path for that incident.

Green tier — Low Risk: Read-only diagnostic and reporting actions. SELECT queries, log analysis, configuration reads. The platform executes these fully autonomously and delivers the augmented report directly to the user. No human involvement required. This covers the majority of platform activity by volume.

Amber tier — Mid Risk: Non-destructive management actions with no permanent data changes. Rerunning a failed concurrent program, purging old log files, resetting a stuck workflow activity. The platform auto-executes these actions but keeps an Instant Rollback button active for a configurable window (default thirty minutes) so an administrator can undo the action without additional SQL.

Red tier — High Risk: Any action that modifies production data, changes system configuration, or executes DDL-level commands. The platform stops. It does not execute. It sends the Decision Tower notification and waits.

The three-tier traffic light framework that governs every platform action. Red tier actions never execute without a signed human approval — this is enforced architecturally.
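The tiering rule described above can be sketched as a small classifier. This is a minimal illustration, not the platform's actual router: the action names, the `classify` function, and the SELECT-only heuristic are all assumptions made for the example. The one design choice worth noting is that anything unrecognized falls through to Red, so a classification gap results in a human review rather than an unreviewed execution.

```python
import re
from enum import Enum
from typing import Optional


class Tier(Enum):
    GREEN = "green"  # read-only: execute autonomously
    AMBER = "amber"  # non-destructive: auto-execute, keep the rollback window open
    RED = "red"      # data, configuration, or DDL change: Safe-Stop and wait


# Hypothetical allow-list of non-destructive management actions.
AMBER_ACTIONS = frozenset(
    {"rerun_concurrent_program", "purge_old_logs", "reset_workflow_activity"}
)

_SELECT_ONLY = re.compile(r"^\s*select\b", re.IGNORECASE)
_FOR_UPDATE = re.compile(r"\bfor\s+update\b", re.IGNORECASE)


def classify(action: str, sql: Optional[str] = None) -> Tier:
    """Return the risk tier for a proposed action; unknown input is RED."""
    if action in AMBER_ACTIONS and sql is None:
        return Tier.AMBER
    if action == "run_query" and sql is not None:
        body = sql.strip().rstrip(";")
        single_statement = ";" not in body  # conservative: also rejects ';' in literals
        if (
            single_statement
            and _SELECT_ONLY.match(body)
            and not _FOR_UPDATE.search(body)  # SELECT ... FOR UPDATE takes row locks
        ):
            return Tier.GREEN
    return Tier.RED
```

A string check like this is only a first filter. The read-only database connection described later in the article is what actually prevents a misclassified statement from changing anything.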

The Safe-Stop Sequence

The Safe-Stop sequence is the specific behavior the platform executes when it reaches a High-Risk action. It is not a pause button — it is a complete state preservation and human handoff protocol designed so that no information is lost, no action is taken without authorization, and the DBA who receives the notification has everything they need to make an informed decision.

When a High-Risk action is detected, n8n serializes the complete fix context to the Postgres workflow state store. This means the workflow can wait indefinitely — minutes, hours, or days — without losing the diagnostic evidence, the LLM reasoning path, the proposed SQL, or the Oracle Support Note reference. There is no timeout that auto-executes the fix if the DBA doesn't respond. The workflow holds state until a human decision is received.
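To make the state-preservation idea concrete, here is a minimal sketch of parking a fix context and reading it back later. The `FixContext` fields mirror the items listed above, but the class names, the status strings, and the in-memory dictionary standing in for the Postgres state store are all assumptions for illustration. Note that nothing in the sketch consults a clock: the status changes only when a decision is recorded.

```python
import json
from dataclasses import asdict, dataclass
from typing import Dict


@dataclass(frozen=True)
class FixContext:
    incident_id: str
    diagnostic_evidence: str
    reasoning_path: str
    proposed_sql: str
    support_note_ref: str


class StateStore:
    """In-memory stand-in for the Postgres workflow state table (assumption)."""

    def __init__(self) -> None:
        self._rows: Dict[str, str] = {}

    def park(self, ctx: FixContext) -> None:
        # Serialize the whole context so nothing depends on process memory.
        row = {"status": "AWAITING_APPROVAL", "context": asdict(ctx)}
        self._rows[ctx.incident_id] = json.dumps(row)

    def load(self, incident_id: str) -> FixContext:
        return FixContext(**json.loads(self._rows[incident_id])["context"])

    def status(self, incident_id: str) -> str:
        return json.loads(self._rows[incident_id])["status"]

    def record_decision(self, incident_id: str, decision: str) -> None:
        # The only transition out of AWAITING_APPROVAL is a human decision.
        row = json.loads(self._rows[incident_id])
        row["status"] = f"DECIDED:{decision}"
        self._rows[incident_id] = json.dumps(row)
```

Because the context round-trips through JSON, the same record can be reloaded after a restart or a multi-day wait and presented to the DBA unchanged.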

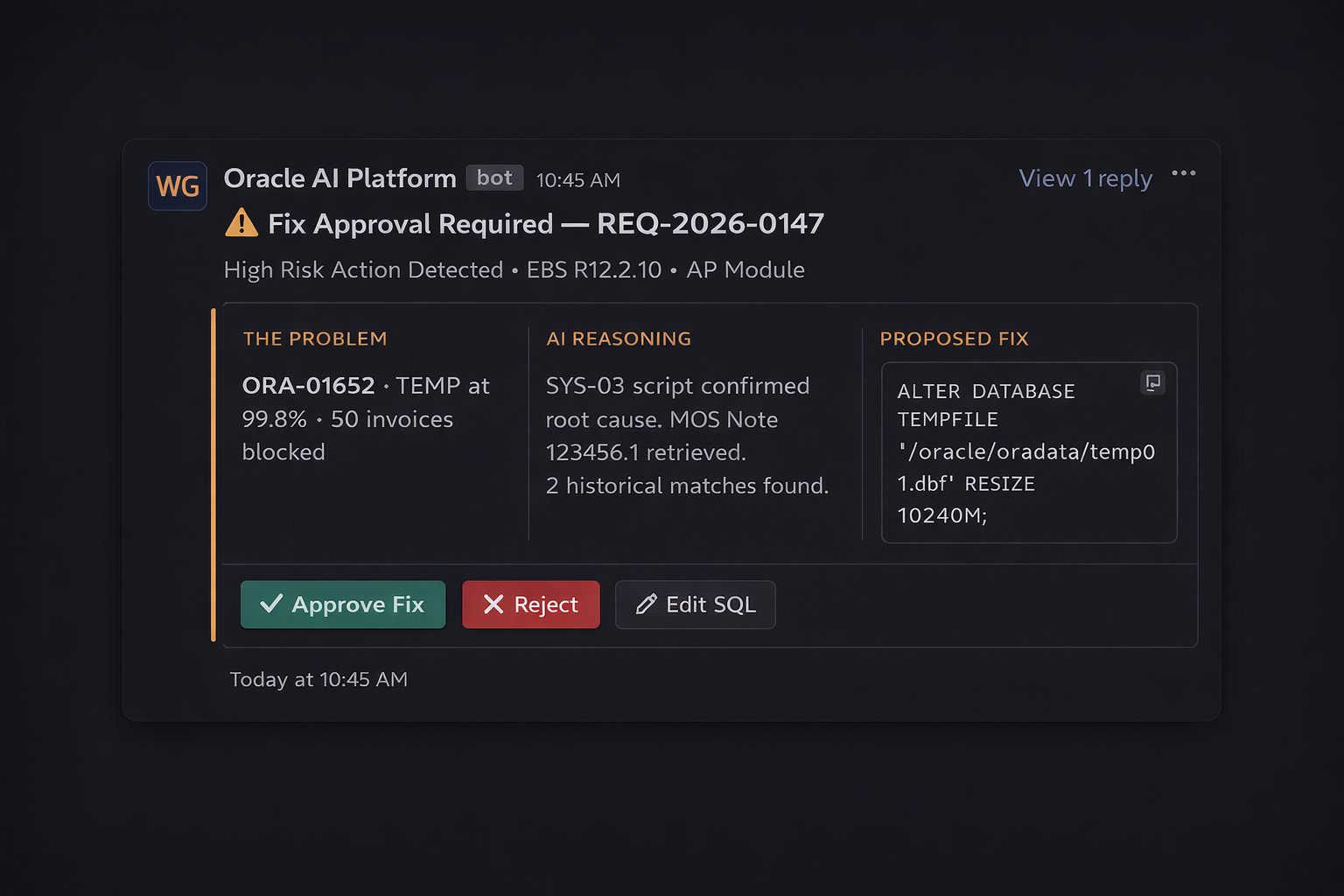

The Decision Tower notification presents three panels of evidence to the approving DBA: what is broken and how many records are affected, how the AI reached its root cause conclusion (with source citations), and the proposed fix command with a dry-run result. The DBA has three options: Approve (execute exactly as proposed), Reject (close the incident, no action taken), or Edit SQL (submit an amended command for final confirmation before execution).

The Decision Tower notification in Slack. The DBA's entire involvement: review three panels, click one button.
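The three DBA options map naturally onto a small decision handler. The sketch below is illustrative only: the function name, the decision strings, and the outcome statuses are assumptions, not the platform's actual interface. It shows the one asymmetry in the flow, namely that an edited command does not execute directly but goes back for final confirmation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Outcome:
    status: str                # "EXECUTE", "CLOSED", or "NEEDS_CONFIRMATION"
    sql: Optional[str] = None  # the command this outcome refers to, if any


def handle_decision(
    decision: str, proposed_sql: str, edited_sql: Optional[str] = None
) -> Outcome:
    """Translate a Decision Tower button click into the next workflow step."""
    if decision == "approve":
        # Execute exactly what was proposed and reviewed.
        return Outcome("EXECUTE", proposed_sql)
    if decision == "reject":
        # Close the incident; no SQL is carried forward.
        return Outcome("CLOSED")
    if decision == "edit_sql":
        if not edited_sql or not edited_sql.strip():
            raise ValueError("edit_sql requires an amended command")
        # Amended SQL is never run directly; it needs a final confirmation.
        return Outcome("NEEDS_CONFIRMATION", edited_sql)
    raise ValueError(f"unknown decision: {decision!r}")
```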

The Safe-Stop guarantee is not a policy document. It is implemented in the platform's access control architecture. The diagnostic Oracle connection is read-only, so it cannot execute a fix even if the LLM analysis generates one. The execution connection activates only after it receives a validated JWT from the approval workflow. These are not configurable settings. They are structural constraints that cannot be bypassed by any workflow configuration change.
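One way to picture the execution gate is a token check that runs before any write connection is opened. The sketch below hand-rolls an HS256-signed JWT with the Python standard library so it stays self-contained. The function names, the shared secret, and the `sql_sha256` claim that binds the approval to one exact statement are assumptions for illustration; the source does not describe the token's contents, and a real deployment would more likely use an established JWT library.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sql_digest(sql: str) -> str:
    return hashlib.sha256(sql.encode("utf-8")).hexdigest()


def issue_approval_token(secret: bytes, approver: str, sql: str,
                         ttl_seconds: int = 300, now: Optional[int] = None) -> str:
    """Issued by the approval workflow once a human clicks Approve."""
    now = int(time.time()) if now is None else now
    header = _b64url(json.dumps({"alg": "HS256", "typ": "JWT"}).encode("utf-8"))
    claims = {"sub": approver, "sql_sha256": sql_digest(sql), "exp": now + ttl_seconds}
    payload = _b64url(json.dumps(claims).encode("utf-8"))
    mac = hmac.new(secret, f"{header}.{payload}".encode("ascii"), hashlib.sha256)
    return f"{header}.{payload}.{_b64url(mac.digest())}"


def authorize_execution(secret: bytes, token: str, sql: str,
                        now: Optional[int] = None) -> str:
    """Return the approver identity, or raise PermissionError if the gate stays shut."""
    now = int(time.time()) if now is None else now
    try:
        header_b64, payload_b64, sig_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        claims = json.loads(_b64url_decode(payload_b64))
        signature = _b64url_decode(sig_b64)
        if not isinstance(header, dict) or not isinstance(claims, dict):
            raise ValueError("header and payload must be JSON objects")
    except (ValueError, TypeError) as exc:
        raise PermissionError("malformed token") from exc
    if header.get("alg") != "HS256":
        raise PermissionError("unexpected algorithm")
    expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode("ascii"),
                        hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        raise PermissionError("bad signature")
    if claims.get("exp", 0) <= now:
        raise PermissionError("token expired")
    if claims.get("sql_sha256") != sql_digest(sql):
        raise PermissionError("token does not cover this SQL")
    return claims["sub"]
```

Binding the token to a digest of the SQL means an approval for one statement cannot be reused to run a different one. The short expiry applies only after approval, so it does not conflict with the workflow waiting indefinitely for the decision itself.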

Why This Is Better Than Full Autonomy

The Safe-Stop model captures almost all the time savings of full autonomy while maintaining the control properties that production Oracle environments require. Consider the ORA-01652 example: the DBA's involvement is reduced to reviewing a ninety-second summary and clicking Approve. The forty-five minutes of diagnostic work, Oracle Support searching, fix formulation, and documentation is handled by the platform. The DBA's contribution is the judgment call — which is exactly where their expertise belongs.

For the compliance team, every High-Risk fix has a JWT-verified approval record with the exact approver identity, the exact SQL that executed, and the before/after state. This is a stronger audit trail than most manual DBA change management processes produce, because it is generated automatically and cannot be modified after the fact.
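The source does not say how the audit trail is protected against later modification. One common technique for making a log tamper-evident is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below illustrates that technique under that assumption; the record fields and function names are hypothetical, and this is not a description of the platform's actual storage.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _entry_hash(prev_hash: str, record: Dict[str, str]) -> str:
    # Canonical JSON so the same record always hashes to the same value.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + canonical).encode("utf-8")).hexdigest()


def append(log: List[dict], record: Dict[str, str]) -> None:
    """Append an approval record, chaining it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append(
        {"record": dict(record), "prev": prev_hash,
         "hash": _entry_hash(prev_hash, record)}
    )


def verify(log: List[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this makes changes detectable rather than impossible, so it is normally paired with storage permissions that prevent rewriting the whole log.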

For the Oracle team, the Safe-Stop model means that a misdiagnosis, a case where the AI identified the wrong root cause, results in a rejected approval request rather than a bad fix applied to production. The human checkpoint is the last line of defense, and the Decision Tower is designed to make that checkpoint as fast and well-informed as possible.

Building AI That Earns Trust Over Time

The traffic light framework is also a trust-building mechanism. In the early weeks of a new deployment, the lead DBA might review every Amber-tier action before allowing the platform to auto-execute it. As the platform demonstrates consistent accuracy in root cause analysis and fix formulation, confidence builds. The approval rate for High-Risk fixes climbs toward 100% for common error patterns. The time spent on each Decision Tower review drops as the DBA learns to trust the evidence chain.

Full autonomy asks for trust up front, before that trust has been earned. The Safe-Stop model builds trust incrementally, through a track record of correct diagnoses and governed fixes. That is the right way to introduce AI into a production Oracle environment — not as a replacement for human judgment, but as a force multiplier for it.